AI is no longer simply driving demand for compute. It is restructuring the entire infrastructure stack — from chips and optical interconnect to manufacturing capacity, network architecture, and supply chain strategy.

As hyperscale AI clusters continue scaling, the optical communication industry is entering a new phase where connectivity becomes foundational infrastructure rather than supporting technology.

The first phase of the AI boom focused heavily on compute performance.

Today, the bottleneck is moving outward into infrastructure-level challenges.

This transition is rapidly changing the industry conversation from “faster chips” to “faster systems.”

At the center of this shift is optical interconnect technology.

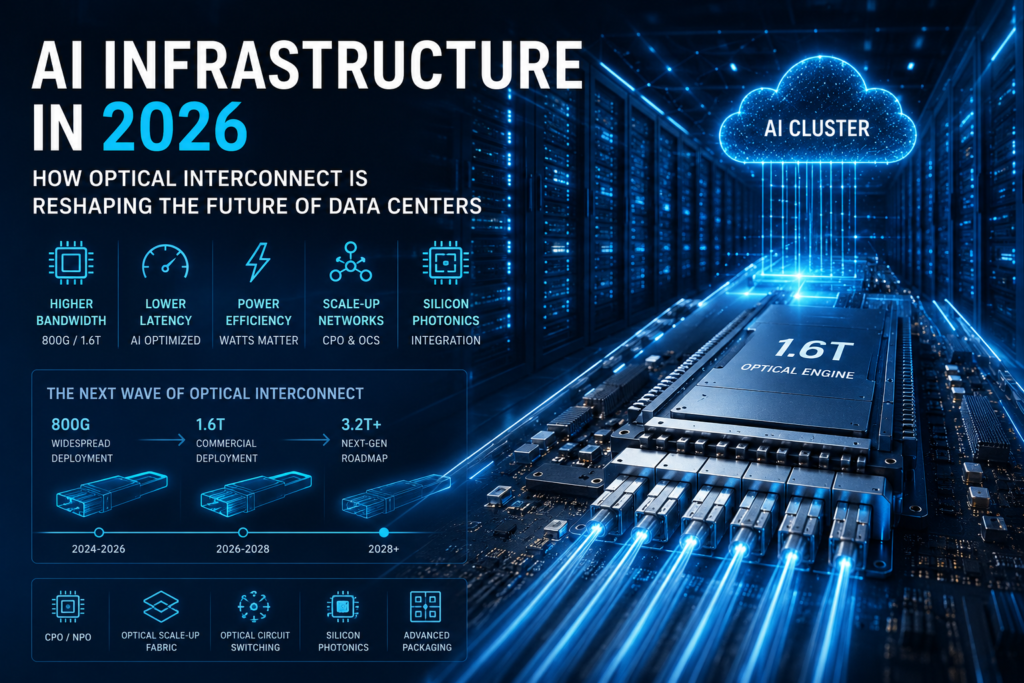

Recent market trends indicate that 800G deployments will keep accelerating through 2026, while 1.6T optical connectivity is beginning to move from roadmap planning into real deployment.

This is not simply another upgrade cycle—it represents a structural redesign of modern AI data center architecture.

Historically, interconnect was treated as background infrastructure.

That assumption is now breaking down.

In modern AI clusters, connectivity increasingly determines:

This explains why hyperscalers are investing heavily in:

The launch of the OCI (Optical Compute Interconnect) initiative by companies including NVIDIA, AMD, Broadcom, Microsoft, Meta, and OpenAI reflects a major industry transition: optics is moving closer to the compute layer itself.

Future roadmaps already point toward optical interconnect speeds scaling to 3.2 Tb/s per fiber in next-generation AI systems.

The impact of AI infrastructure expansion is no longer limited to optical module vendors.

AI demand is now influencing:

Some optical communication and semiconductor segments are already experiencing 40%–65% year-over-year growth driven by AI infrastructure demand.

At the same time, production capacity is becoming a major bottleneck.

Advanced-node foundry capacity remains constrained globally, while AI accelerators continue consuming increasing amounts of wafer allocation and advanced packaging resources.

This is why the semiconductor story is no longer only about technological leadership—it is increasingly about ecosystem coordination and supply chain execution.

One of the clearest trends emerging in 2026 is vertical integration.

Leading AI companies are no longer optimizing only compute hardware. They are optimizing the entire infrastructure stack.

This creates growing tension between open, multi-vendor interoperability and tightly integrated, vertically optimized systems.

For years, hyperscale infrastructure focused heavily on interoperability and multi-vendor flexibility.

However, AI economics increasingly favor tightly integrated systems.

As a result, optics is becoming one of the most strategic layers inside future AI infrastructure.

The industry still frames AI infrastructure as an innovation race.

Increasingly, however, the real limitation is operational scalability.

Three major constraints are emerging:

Advanced-node wafer supply remains constrained globally, while AI demand continues accelerating.

Higher rack density is pushing cooling and power delivery into system-level architectural territory.

As AI clusters scale, network fabrics become significantly harder to manage.

This means the next competitive phase may not be won by the company with the fastest chip, but by the company capable of building the most scalable infrastructure ecosystem.

While AI infrastructure investment continues to accelerate, traditional telecom infrastructure markets are evolving differently.

Industry reports indicate that telecom infrastructure growth is becoming increasingly efficiency-focused rather than hype-driven.

This suggests a broader market divergence between AI-driven infrastructure and traditional telecom infrastructure.

This shift may significantly influence infrastructure investment priorities over the coming years.

Several trends are becoming increasingly clear across the industry.

800G deployments will continue scaling rapidly through 2026 and beyond.

1.6T is no longer theoretical. Ecosystem readiness is accelerating across hyperscale AI environments.

CPO, NPO, and optical scale-up fabrics will continue evolving toward tighter integration with GPUs and accelerators.

Future competition may increasingly focus on bandwidth-per-watt rather than raw bandwidth alone.

Manufacturing partnerships, advanced packaging access, and foundry allocation are becoming core competitive advantages.
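The bandwidth-per-watt comparison mentioned above can be made concrete with a little arithmetic. The sketch below is illustrative only: the `OpticalLink` class, the module names, and especially the power figures are hypothetical placeholders, not vendor specifications or measured data. It shows how the same two inputs (line rate and power draw) yield both Gb/s per watt and the equivalent energy-per-bit figure in picojoules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticalLink:
    """A link described only by line rate and power draw (both assumed inputs)."""
    name: str
    bandwidth_gbps: float  # aggregate line rate in Gb/s
    power_watts: float     # power draw in watts (hypothetical values below)

    def gbps_per_watt(self) -> float:
        # Higher is better: how much bandwidth each watt buys.
        return self.bandwidth_gbps / self.power_watts

    def picojoules_per_bit(self) -> float:
        # 1 W per Gb/s equals 1e-9 J/bit, i.e. 1000 pJ/bit. Lower is better.
        return self.power_watts * 1000.0 / self.bandwidth_gbps


def rank_by_efficiency(links: list[OpticalLink]) -> list[OpticalLink]:
    # Sort from most to least bandwidth-efficient.
    return sorted(links, key=lambda link: link.gbps_per_watt(), reverse=True)


# Placeholder numbers chosen for easy arithmetic, NOT real module specs.
links = [
    OpticalLink("800G module (hypothetical)", bandwidth_gbps=800, power_watts=16),
    OpticalLink("1.6T module (hypothetical)", bandwidth_gbps=1600, power_watts=25),
]

for link in rank_by_efficiency(links):
    print(f"{link.name}: {link.gbps_per_watt():.1f} Gb/s per W, "
          f"{link.picojoules_per_bit():.3f} pJ/bit")
```

With these placeholder inputs, the faster link draws more total power yet still ranks higher on efficiency. That is the distinction the metric is meant to capture: raw bandwidth and bandwidth-per-watt can point to different winners once real power figures are substituted.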

AI infrastructure is entering its second phase.

The first phase was about compute acceleration.

The next phase is about system scalability.

In this transition, optical interconnect is no longer just a supporting technology—it is becoming foundational infrastructure for the AI era.

Over the next five years, the companies that succeed may not simply be those with the fastest hardware.

They will be the ones capable of coordinating:

All at the same time.
